What is an AI Audit - How to Prove Your Team's AI Usage Is Compliant?

An employee copies customer data into ChatGPT to draft a quick response. It saves time, but that data is now outside the company's control. This is how AI risks often begin — quietly and without visibility.

The use of tools like ChatGPT has grown rapidly across teams, but the convenience brings real concerns around data exposure, compliance, and accountability. According to IBM's Cost of a Data Breach Report, the average breach costs more than $4 million, so even a small lapse can be expensive. At the same time, research from LayerX indicates that employees often share sensitive information with AI tools without realizing the impact.

Governments are now responding with stricter oversight. Countries like the United Arab Emirates have introduced AI governance frameworks that focus on transparency, accountability, and responsible usage. Organizations are expected to know how AI is being used within their teams and ensure it aligns with these expectations.

To keep up, companies need clear visibility into AI usage. They must define rules, monitor activity, and reduce the risk of sensitive data exposure. This is where AI audits come in.

In this post, we'll break down why AI audits are essential and how you can prove your team's ChatGPT usage is compliant.

Real-World ChatGPT Data Leaks

The risks of using ChatGPT without proper controls are not theoretical. Several real-world incidents show how easily sensitive data can be exposed:

Samsung Source Code Leak

In 2023, employees at Samsung unintentionally leaked confidential information by using ChatGPT. Engineers pasted internal source code and meeting notes into the tool to debug and summarize content.

This data was then processed externally, raising concerns about intellectual property exposure. Following the incident, Samsung restricted the use of generative AI tools within parts of the organization.

Confidential Data Shared by Employees

Research by LayerX found that employees frequently paste sensitive data into AI tools. This includes customer information, internal documents, and financial details, often without realizing the compliance risks. Without monitoring, organizations have little visibility into what is being shared.

What Is an AI Audit?

An AI audit is a structured examination of how your AI systems are built, trained, and used. It evaluates AI tools for ethical and legal compliance, with a focus on transparency, security, and performance. As AI regulations emerge around the world, organizations are under growing pressure to review how AI tools are used across their teams and to confirm that those practices align with new legal and ethical standards.

Traditional audits relied on people manually inspecting documents. That approach is slow, and it does not scale to the volume of data organizations now process, all of which needs to be examined systematically. Modern AI auditing therefore relies on automation, including artificial intelligence and machine learning, to handle reviews that manual methods cannot keep up with.

Why Do AI Audits Matter?

AI audits are quickly becoming a requirement rather than an option. Most obligations under the EU AI Act are scheduled to apply from August 2026, after which organizations whose AI usage falls short may face fines. Beyond compliance, regular AI audits act as a safeguard against ethical, legal, and operational risks. AI audits help with:

Compliance and Ethical Responsibilities

Global AI regulations require organizations to follow strict rules. Failure to comply can result in fines worth millions of dollars and even business suspensions. AI auditing helps ensure that AI usage is in compliance and follows ethical standards such as non-discrimination, fairness, and data privacy.

Preventing Security and Operational Issues

Unmonitored AI usage can lead to data leaks, which create security risks and vulnerabilities. An AI audit helps protect your systems against leaks, theft, and other risks. It also helps you spot errors and improve overall security and performance.

Ensuring Transparency for Clients

Clients, stakeholders, and regulators demand transparency into how AI is used. Auditing provides visibility and builds trust. It shows how decisions are made and ensures accountability across teams. This level of clarity also helps organizations respond confidently to audits and regulatory reviews.

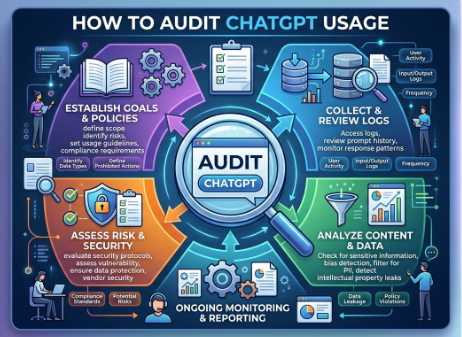

How to Audit ChatGPT Usage?

Organizations are rapidly adopting OpenAI's ChatGPT because of the benefits it offers. Employees use it for drafting emails, analyzing market trends, summarizing financial reports, and even generating code. However, most organizations focus only on the benefits of ChatGPT and not on the risks. So what are the risks of ChatGPT usage in an organization, and how do you audit that usage so your company stays compliant and safe from data leaks?

These are the basic steps for auditing ChatGPT usage at your workplace:

- Step 1: Start by looking at how your team currently uses ChatGPT, Claude, and other tools. Identify risks, sensitive data exposure, and areas where compliance could be improved.

- Step 2: Select a platform that can track ChatGPT prompt activity, enforce policies, and integrate with your existing workflows.

- Step 3: Organize your internal AI usage rules and connect the monitoring tool to your systems. This ensures that ChatGPT usage is visible and auditable in real time.

- Step 4: Run your first audit to see how the team is using ChatGPT. This baseline shows where sensitive data might be at risk and which practices need adjustment.

- Step 5: Keep monitoring ChatGPT activity regularly. Continuous auditing helps catch potential risks early, ensures compliance, and keeps AI usage aligned with company policies.
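
As a rough illustration of the baseline audit in Step 4, the sketch below summarizes a hypothetical export of logged prompts. The `PromptRecord` fields, the category names, and the `baseline_audit` function are assumptions made for this example, not the export format of any particular monitoring platform.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PromptRecord:
    """One logged prompt. Hypothetical export format, not a real product schema."""
    user: str
    tool: str  # e.g. "ChatGPT" or "Claude"
    # Sensitive-data categories the monitoring tool detected in this prompt.
    findings: list[str] = field(default_factory=list)


def baseline_audit(records: list[PromptRecord]) -> dict:
    """Count flagged prompts overall, per tool, and per data category."""
    by_tool = Counter()
    by_category = Counter()
    flagged = 0
    for record in records:
        if record.findings:
            flagged += 1
            by_tool[record.tool] += 1
            by_category.update(record.findings)
    total = len(records)
    return {
        "total_prompts": total,
        "flagged_prompts": flagged,
        "flagged_ratio": flagged / total if total else 0.0,
        "flagged_by_tool": dict(by_tool),
        "flagged_by_category": dict(by_category),
    }


# Made-up sample data for illustration only.
records = [
    PromptRecord("alice", "ChatGPT", ["customer_email"]),
    PromptRecord("bob", "ChatGPT"),
    PromptRecord("carol", "Claude", ["source_code", "api_key"]),
    PromptRecord("alice", "ChatGPT"),
]
report = baseline_audit(records)
print(report)
```

Re-running the same summary on a schedule, as Step 5 suggests, lets you compare each period against this baseline.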

How to Prove Your Team's ChatGPT Usage Is Compliant?

Now that you understand how to audit ChatGPT usage, the next step is proving your team's compliance. With an auditing tool such as SilentGuard, you can demonstrate that compliance. To do so, you need to:

- Produce ready-to-submit reports that follow legal and industry standards.

- Include tools that clarify how AI makes decisions, so teams can understand its outputs.

- Reduce manual review by automating compliance tasks, saving time and effort for staff.

Many organizations now perform AI audits proactively, even where auditing is not legally required. They do so for two main reasons: to strengthen their reputation and to make a positive societal impact. By auditing AI systems proactively, companies can meet legal and ethical standards and build trust with employees and clients. That trust influences buying behavior and loyalty, and the audits themselves help avoid penalties.

How Can SilentGuard Solve the Problem?

To audit ChatGPT usage, businesses need a dependable, structured approach. Traditional audits rely on periodic manual reviews and often test only samples, which makes them slow and unable to capture real-time risks.

On the other hand, automated AI auditing is continuous and data-driven. It provides ongoing visibility into how AI tools are being used across teams. SilentGuard enables this shift by allowing organizations to monitor usage in real time and maintain consistent oversight. Here's how SilentGuard helps with AI auditing:

Provides Visibility Into Shadow AI Usage

SilentGuard gives you visibility into how ChatGPT is being used across different devices and apps. It also helps you understand usage patterns and identify blind spots so you can eliminate shadow AI.

Prevents Data Leaking into ChatGPT

SilentGuard enables organizations to prevent sensitive data from being shared with ChatGPT. It detects and blocks restricted information before it leaves the organization. It also sends real-time alerts that help prevent leaks.

Maintains Detailed Activity Logs

It helps you keep a complete record of AI interactions. Later, these logs help track usage and support internal reviews when needed.

Supports Audit Readiness with Forensic Evidence

With SilentGuard, you can organize usage data into structured records. This makes it easier to demonstrate compliance during audits and regulatory checks. The logs, screenshots, and alerts together provide evidence of shadow AI usage.

Using SilentGuard for AI Auditing

To use SilentGuard for AI auditing, you just have to integrate it with ChatGPT or any other AI tool. Here's how to do it:

- Step 1: Add SilentGuard to ChatGPT by integrating it via API or a browser extension. The setup is simple and takes only a few minutes.

- Step 2: SilentGuard captures prompts directly on the device. It checks for sensitive data using pattern matching and a local language model.

- Step 3: Define your guardrails, and let your team work with AI as they normally do. SilentGuard blocks what shouldn't be shared and maintains logs for compliance.
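
Step 2 mentions pattern matching. The sketch below shows, in general terms, what a regex-based check with an allow-or-block decision could look like. It is not SilentGuard's implementation: the patterns, the `BLOCKED_CATEGORIES` policy, and the `check_prompt` function are hypothetical, and the local language model step is not covered here.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; a production policy would be broader and tuned
# to the organization's own data.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "credit_card": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}

# Hypothetical guardrail: these categories block the prompt outright,
# while any other finding is only recorded.
BLOCKED_CATEGORIES = {"credit_card", "aws_access_key"}


@dataclass
class Decision:
    allowed: bool
    findings: list[str]


def check_prompt(prompt: str) -> Decision:
    """Return which patterns matched and whether the prompt may be sent."""
    findings = sorted(name for name, pattern in PATTERNS.items() if pattern.search(prompt))
    allowed = not any(name in BLOCKED_CATEGORIES for name in findings)
    return Decision(allowed=allowed, findings=findings)


for text in [
    "Summarize this meeting agenda",
    "Reply to jane.doe@example.com about her order",
    "Charge card 4111 1111 1111 1111 today",
]:
    decision = check_prompt(text)
    print(decision.allowed, decision.findings)
```

Separating detection (`findings`) from policy (`BLOCKED_CATEGORIES`) means the guardrails in Step 3 can change without touching the patterns.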

With SilentGuard, you can bring continuous visibility and transparency into AI usage at your organization. Book a Demo and start doing AI audits to stay safe, compliant, and future-ready.

Best Practices for AI Auditing

To implement AI auditing effectively, organizations can follow structured methods and proven approaches:

- Adopt recognized AI standards: Frameworks like NIST AI RMF, ISO/IEC 42001, and OECD AI Principles provide guidance for responsible and reliable AI. Use these as a blueprint for designing consistent audit processes.

- Establish a continuous audit cycle: AI auditing is ongoing, not a one-time task. Implement a cycle covering planning, testing, monitoring, and updating models to ensure systems stay compliant as they evolve.

- Maintain detailed audit records: Keep clear logs of model outputs, input data, and retraining events. This ensures accountability and makes regulatory reviews or external audits smoother.
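
To make the record-keeping practice more concrete, here is a minimal sketch of an append-only audit log written as JSON Lines. The field names, the helper functions, and the choice to store a SHA-256 hash of the prompt instead of its raw text are all assumptions for illustration. Your retention and privacy requirements may call for a different design.

```python
import hashlib
import io
import json
from datetime import datetime, timezone
from typing import TextIO


def make_audit_record(user: str, tool: str, prompt: str,
                      findings: list[str], action: str) -> dict:
    """Build one audit entry without storing the raw prompt text."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_length": len(prompt),
        "findings": sorted(findings),
        "action": action,  # e.g. "allowed" or "blocked"
    }


def append_record(stream: TextIO, record: dict) -> None:
    """Write the record as a single JSON line."""
    stream.write(json.dumps(record, sort_keys=True) + "\n")


def load_records(stream: TextIO) -> list[dict]:
    """Read every non-empty JSON line back into a dict."""
    return [json.loads(line) for line in stream if line.strip()]


# An in-memory buffer stands in for a log file opened in append mode.
log = io.StringIO()
append_record(log, make_audit_record("alice", "ChatGPT", "Draft a reply to a customer", [], "allowed"))
append_record(log, make_audit_record("bob", "ChatGPT", "Card 4111 1111 1111 1111", ["credit_card"], "blocked"))

log.seek(0)
audit_records = load_records(log)
print(len(audit_records), [r["action"] for r in audit_records])
```

One line per event keeps the log easy to append to and easy to filter when a reviewer asks for a specific user, tool, or time range.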

Conclusion

AI auditing is no longer optional — it's essential for ensuring responsible, compliant, and transparent use of tools like ChatGPT. By following structured frameworks, maintaining clear records, and implementing continuous monitoring, organizations can stay compliant with the recent AI regulations.

With SilentGuard, you can monitor ChatGPT usage in real time, enforce internal policies, and generate audit-ready reports effortlessly. Protect your organization and give leadership the visibility they need — start using SilentGuard today!

---

Expert Perspective

> "Organizations that treat AI auditing as a one-time compliance checkbox will be caught flat-footed. Effective AI governance requires continuous monitoring, not just periodic reviews. The question isn't whether a breach will occur — it's whether you'll have the audit trail to understand it when it does."
>
> — Andrew Burt, Chief Privacy Officer at Immuta and former FBI cybersecurity attorney

---

References & Sources

1. IBM 2025 Cost of a Data Breach Report: The average cost of a data breach exceeds $4 million; breaches involving shadow AI cost roughly $670,000 more on average.

2. NIST AI Risk Management Framework (AI RMF 1.0): A voluntary framework for managing AI risk, organized around four functions (Govern, Map, Measure, and Manage) that can anchor audit planning.

3. ISO/IEC 42001:2023: International standard for AI management systems, providing audit and certification requirements for responsible AI.

4. LayerX Enterprise Security Report: Research showing employees routinely paste customer information, internal documents, and financial details into AI tools without recognizing compliance risks.

5. EU AI Act (August 2026): Requires organizations to maintain technical documentation, audit logs, and human oversight records for high-risk AI systems.

6. OECD AI Principles: Guidelines on AI accountability and auditability adopted by 46+ countries, forming the basis for many national AI audit frameworks.
